Digital transformation, a phenomenon that has intensified with the COVID-19 pandemic, has reshaped the corporate world and society. In this scenario, technologies such as Big Data and Artificial Intelligence (AI) emerge as fundamental pillars, boosting the productivity and competitiveness of organizations.

What stands out most in both is the more strategic use of data, which lets organizations increase productivity and competitive potential. This makes the two technologies extremely valuable in the current scenario.

On the other hand, they create a relationship of dependence, so companies must take measures to keep these resources working well. Software governance is therefore a fundamental process for companies.

In this article, we explain these two solutions and how, together, they have affected companies around the world. Read on!

Discover the link between these two technologies

The two are closely related: Big Data is a data source for Artificial Intelligence, which needs consolidated information to be properly applied in various segments of the economy (industry, marketing, sales, logistics, among others).

But why does this happen? The answer starts with the fact that Big Data is capable of collecting a great quantity of data that circulates on the web. In this way, it makes it possible to use the information to analyze and predict trends, which is very important, for example, when launching new products and services.

From all the information obtained by Big Data, it is possible to get an idea of how a person browses the web, which content they most search for on a social network and what their shopping habits are when accessing the internet.

As much as human beings make an effort to obtain and analyze data, it is impossible to do this activity with the speed, quality and efficiency expected due to the explosion of information circulating in the online world. In other words, a technological apparatus is necessary for decision-making to be the best possible.

Therefore, Artificial Intelligence has gained great prominence in recent times. One reason is that AI enables the automation of data identification and analysis processes. In addition, it enables the deployment of machine learning, in which a system continuously improves as it processes more data.

With this feature, a piece of equipment, as it receives information, becomes capable of performing a series of tasks that were previously restricted to human beings. Thanks to AI, the industrial sector has automated production, which helps to increase the number of items manufactured and reduce labor costs. Big Data and Artificial Intelligence are mainly responsible for eliminating several manual activities in industry and for enabling corporate management systems, which work with up-to-date information to facilitate decision making.

Learn more about Big Data

It is undeniable that data collection is key for a company to make the most of the potential of information. For you to understand this better, let's point out the 5 Vs that guide Big Data. Follow up!

Volume

It consists of the ability to manage a large volume of data, something essential due to the immense amount of information that is on the internet. With mobile devices and the presence of multiple platforms, it is necessary to have a tool capable of quickly collecting, storing and processing data.

Variety

There are several types of data that are managed by Big Data and this is essential for the enrichment and quality of analysis.

Velocity

For decision making to be accurate and fast, information must be available quickly, ideally in real time. Today this is possible thanks to the dynamism of Big Data and AI.

Veracity

It is very important to have technological resources available that enable complex analyses. On the other hand, this only becomes a competitive advantage when the data obtained is reliable, that is, the information is useful and comes from a known source.

Value

It is also worth noting that there is no point in collecting a huge range of data if they do not meet the needs of companies. With a state-of-the-art technological apparatus, it is possible to count on mechanisms capable of offering strategic and vital information for the growth of a business.

Learn more about the power of Artificial Intelligence and Big Data

The corporate world no longer has room for decisions made solely on the basis of experience and managers' personal impressions of the market. A corporation that still relies on this procedure to achieve more significant results runs a serious risk of losing money and customers.

Currently, companies want to have more predictability on the performance of actions taken. For this to be achieved, the adoption of AI and Big Data is essential because these technologies make it possible to use data to evaluate scenarios, automate processes and make more qualified decisions from the insights generated.

It is also worth mentioning that these two technological features can be integrated with other technologies. A clear example is the Internet of Things (IoT), which allows equipment to exchange data and communicate through wireless networks.

Analyze

In the industrial sector, IoT-connected machines can collect real-time data during production. For example, a conveyor shaft can feed a Big Data system with information on factors such as humidity, speed, temperature and vibration.

Based on the forwarded data, Artificial Intelligence has the inputs it needs for analysis. Thus, it can indicate, for example, the need for maintenance because of a problem identified in the equipment. This makes it simpler to carry out preventive repairs, avoiding prolonged downtime and a drop in productivity.
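As a rough illustration of the maintenance alert described above, the sketch below flags a sensor reading that strays too far from a machine's normal behavior. It uses a simple z-score rule, which is only one of many possible techniques and not necessarily what a production system would use. The function name, the threshold and the vibration values are all hypothetical.

```python
from statistics import mean, stdev


def needs_maintenance(baseline, reading, threshold=3.0):
    """Return True when `reading` deviates from the baseline readings
    by more than `threshold` standard deviations (a simple z-score rule)."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # All baseline readings are identical: any different value is unusual.
        return reading != mu
    return abs(reading - mu) / sigma > threshold


# Hypothetical vibration readings (mm/s) collected while the machine was healthy.
baseline_vibration = [2.1, 2.0, 2.2, 1.9, 2.1, 2.0, 2.2, 2.1]

print(needs_maintenance(baseline_vibration, 2.15))  # close to the baseline
print(needs_maintenance(baseline_vibration, 3.4))   # far from the baseline
```

In practice the same check could run on each of the monitored factors (humidity, speed, temperature, vibration), with thresholds tuned per machine.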

Big Data and Artificial Intelligence also add value in other segments of the economy. In the case of marketing, the two tools allow you to collect and analyze a series of data from consumers on social networks, on the corporate website or in business apps.

Based on the information collected, an entrepreneur is better able to verify the opportune moment to carry out a promotion with a focus on increasing sales. In addition, it is possible to verify, in a more consistent way, if the launch of a new product or service is able to reach goals.

By accurately measuring the engagement level of the target audience, a company can be more confident that customers will be interested in current and upcoming products. This, undeniably, influences sales policy.

Check out the advantages that these technologies generate for your business

Innovation is no longer a differentiator; it has become an obligation for organizations. In other words, adopting it has become necessary to keep the focus on continuous improvement and on delivering more value to consumers.

AI and Big Data are part of this context because of their ability to make valuable information available to various sectors of a company. In inventory management, for example, it is possible to see which items have been idle the longest, which allows integration with the sales area. This practice helps in planning actions to sell products that have been in stock for a long time.
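The idle-stock report mentioned above can be sketched in a few lines. This is a minimal example with made-up data: the item names, dates and the `longest_idle` helper are assumptions for illustration, and a real system would read these records from an inventory database.

```python
from datetime import date

# Hypothetical stock records: (item name, date of last sale).
stock = [
    ("garden hose", date(2024, 1, 10)),
    ("desk lamp", date(2024, 5, 2)),
    ("rain boots", date(2023, 11, 20)),
]


def longest_idle(records, today, top_n=2):
    """Return the `top_n` items with the most days since their last sale."""
    idle = [(name, (today - last_sale).days) for name, last_sale in records]
    return sorted(idle, key=lambda pair: pair[1], reverse=True)[:top_n]


# Items at the top of this list are candidates for a sales promotion.
report = longest_idle(stock, today=date(2024, 6, 1))
print(report)
```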

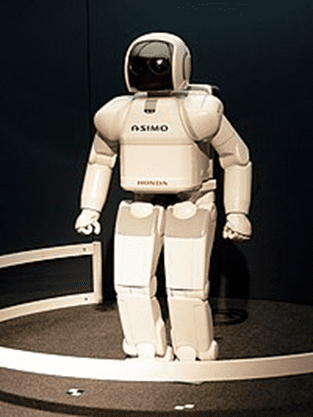

Nor can we ignore the fact that these two technologies contribute to the adoption of chatbots and virtual assistants, which are already commonplace on many enterprise websites and applications. These assistants allow for more efficient and faster service, which improves the customer relationship and firmly places a brand within the digital transformation.

History

The concept of artificial intelligence is not contemporary. Aristotle, teacher of Alexander the Great, imagined autonomous tools that could replace slave labor, one of the earliest recorded anticipations of Artificial Intelligence and an idea that computer science would explore much later. The idea was fully developed in the 20th century, especially in the 1950s, by thinkers such as Herbert Simon and John McCarthy. The early years of AI were full of successes, albeit limited ones. Considering the first computers, the programming tools of the time, and the fact that only a few years earlier computers were seen as objects capable of performing arithmetic operations and nothing else, it was surprising that a computer would perform any remotely intelligent activity.

The initial success continued with the General Problem Solver, or GPS, developed by Newell and Simon. This program was designed to mimic human problem-solving protocols. Within the limited class of puzzles it could handle, it turned out that the order in which the program considered subgoals and possible actions was similar to the order in which humans tackled the same problems. Thus, GPS was perhaps the first program to embody the “thinking humanly” approach.

From the beginning, the foundations of artificial intelligence have been supported by various disciplines that have contributed ideas, viewpoints and techniques to AI. Philosophers (since 400 BC) have made AI conceivable, considering the ideas that the mind is in some ways similar to a machine, that it operates on knowledge encoded in some internal language, and that thought can be used to choose the actions to be performed. In turn, mathematicians provided the tools to manipulate statements of logical certainty as well as uncertain, probabilistic statements. They also set the foundation for understanding computation and reasoning about algorithms.

Economists have formalized the problem of making decisions that maximize the expected outcome for the decision maker. Psychologists have embraced the idea that humans and animals can be considered information-processing machines. Linguists have shown that language use fits this model. Computer engineers provide the artifacts that make AI applications possible. AI programs tend to be extensive and could not function without the great advances in speed and memory that the computer industry has provided.

Today, AI encompasses a wide variety of subfields. Among these subfields is the study of connectionist models or neural networks. A neural network can be seen as a simplified mathematical model of how the human brain works. It consists of a very large number of elementary processing units, or neurons, that receive and send electrical stimuli to each other, forming a highly interconnected network.

In processing, the stimuli received are combined according to the intensity of each connection, producing a single output stimulus. It is the arrangement of interconnections between neurons and their respective intensities that defines the main properties and functioning of a neural network. The study of neural networks, or connectionism, is related to the ability of computers to learn and recognize patterns. We can also highlight the study of molecular biology in an attempt to build artificial life and the area of robotics, linked to biology and seeking to build machines that house artificial life. Another subfield of study is the link between AI and Psychology, in an attempt to represent reasoning and search mechanisms in the machine.

In recent years, there has been a revolution in artificial intelligence work, both in content and methodology. It is now more common to build on existing theories than to propose entirely new ones, to base claims on rigorous theorems or hard experimental evidence rather than on intuition, and to show relevance to real applications rather than toy examples.
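The way a single unit combines its stimuli can be sketched as a weighted sum followed by an activation function. The example below is a minimal illustration, not a full neural network: the sigmoid activation, the function name and the sample values are assumptions chosen for clarity.

```python
import math


def neuron_output(inputs, weights, bias=0.0):
    """Combine the incoming stimuli, each scaled by its connection
    intensity (weight), and squash the total with a sigmoid so the
    unit emits a single output between 0 and 1."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))


# Hypothetical stimuli and connection intensities for one neuron.
stimuli = [1.0, 0.5, -1.0]
intensities = [0.8, -0.4, 0.3]

print(neuron_output(stimuli, intensities))
```

Learning, in this picture, amounts to adjusting the weights so that the network's outputs move closer to the desired ones.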

The use of AI not only allows us to achieve significant performance gains, but also enables the development of innovative applications capable of expanding our senses and intellectual abilities in extraordinary ways. Artificial intelligence is increasingly present, simulating human thought and spreading throughout our daily lives. In May 2017, ABRIA (Brazilian Association of Artificial Intelligence) was created in Brazil with the aim of mapping Brazilian initiatives in the artificial intelligence sector, encompassing efforts among national companies and training specialized labor. This step reinforces that, currently, artificial intelligence has an impact on the economic sector.

Research in experimental AI

Artificial intelligence began as an experimental field in the 1950s with pioneers such as Allen Newell and Herbert Simon, who founded the first artificial intelligence laboratory at Carnegie Mellon University, and John McCarthy, who together with Marvin Minsky founded the MIT AI Lab in 1959. They were among the participants in the famous 1956 summer conference at Dartmouth College.

Historically, there have been two major styles of AI research: “neat” AI and “scruffy” AI. “Neat” AI is clean, classical or symbolic. It involves the manipulation of symbols and abstract concepts, and is the methodology used in most expert systems.

Parallel to this approach there is the “scruffy” or “connectionist” AI approach, of which neural networks are the best example. This approach creates systems that attempt to generate intelligence through learning and adaptation rather than creating systems designed specifically to solve a problem. Both approaches appeared at an early stage in AI history. In the 60s and 70s, connectionists were removed from the forefront of AI research, but interest in this strand of AI resumed in the 80s, when the limitations of “neat” AI began to be realized.

Artificial intelligence research was heavily funded in the 1980s by the Defense Advanced Research Projects Agency in the United States and the Fifth Generation Project in Japan. The funded work failed to produce immediate results, despite grandiose promises from some AI practitioners, leading to significant cuts in government funding in the late 1980s and a subsequent slowdown in activity in the field, known as the AI Winter. Over the next decade, many AI researchers shifted to areas with more modest goals, such as machine learning, robotics and computer vision, although research into pure AI continued at reduced levels.
